Author: Eugene Jang | S2W AI Team

This paper was accepted at ACL (Association for Computational Linguistics) 2023.

View here → https://arxiv.org/pdf/2305.08596.pdf

Photo by Matt Nelson on Unsplash

Photo by Matt Nelson on UnsplashIntroduction

We’re excited to talk about our paper [DarkBERT: A Language Model for the Dark Side of the Internet](https://arxiv.org/pdf/2305.08596.pdf), which was accepted into ACL 2023. Developed by the S2W AI Team (Eugene Jang, Jian Cui, Jin-Woo Chung, Yongjae Lee) in collaboration with KAIST (Korea Advanced Institute of Science and Technology), DarkBERT is the latest addition to S2W’s dark web research.

DarkBERT is a transformer-based encoder model, based on [RoBERTa](https://arxiv.org/abs/1907.11692). Encoder models represent natural language text into semantic representation vectors, which can be used for a multitude of tasks. DarkBERT is unique in that it was trained on dark web data, which allows it to outperform counterpart models on tasks of monitoring or interpreting dark web content.

Background

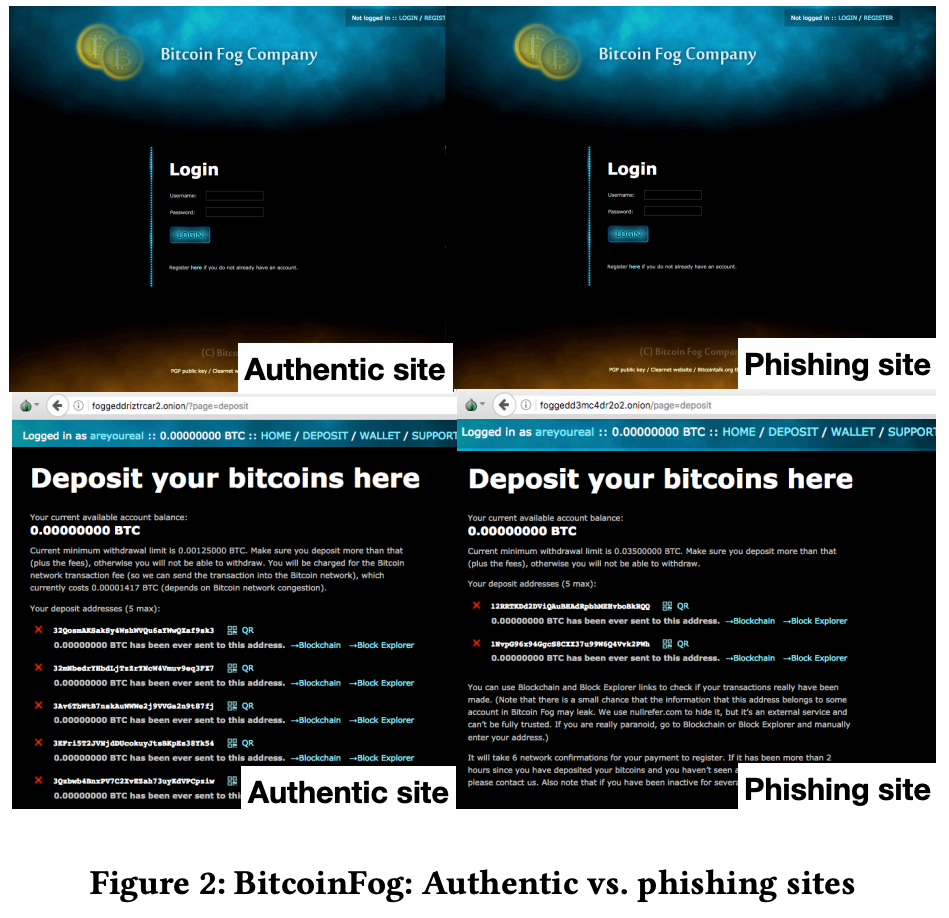

The dark web is a part of the internet that requires specific protocols. These protocols allow the dark web to be anonymous, difficult to access, and difficult to control. These characteristics are often exploited by cybercriminals, who use it to host underground markets and share illegal content. The same characteristics also provide challenges for efforts to monitor the dark web. S2W has a history of monitoring and researching the dark web, bringing insights to the [phishing] (https://nss.kaist.ac.kr/papers/yoon-www2019.pdf) (Doppelgängers on the Dark Web: A Large-scale Assessment on Phishing Hidden Web Services) and [language](https://aclanthology.org/2022.naacl-main.412.pdf) (Shedding New Light on the Language of the Dark Web) of the dark web. You can also find updates on notable incidents on the dark web on this blog.

Figure taken from Doppelgängers on the Dark Web: A Large-scale Assessment on Phishing Hidden Web Services (WWW 2019)

Figure taken from Doppelgängers on the Dark Web: A Large-scale Assessment on Phishing Hidden Web Services (WWW 2019)DarkBERT

Pretrained language models (PLM) have been very powerful, but their effectiveness on the dark web has been [challenged](https://arxiv.org/abs/2201.05613?context=cs.LG). After all, the languages of the dark web and surface web are quite [different](https://aclanthology.org/P19-1419/). Will BERT, trained on the surface web, be optimized to understand dark web language? What if we trained a BERT-like transformer model on the dark web domain.

A critical challenge in creating a pre-trained language model (PLM) is getting the training corpus. The dark web can be notoriously difficult to capture, but S2W’s collection capabilities allowed us to get a sizable collection of dark web text. From our previous [research on dark web language](https://aclanthology.org/2022.naacl-main.412.pdf), we realized that parts of the data could be unsuitable for training. Therefore, we filter the corpus by removing low Information pages, balancing according to category, and deduplicating pages. We also utilize preprocessing to anonymize common identifiers and potentially sensitive information. In the end we had an 5.83 GB unprocessed corpus and a 5.20GB processed corpus.

DarkBERT was trained starting with the RoBERTa base model, which was trained on more data for a longer time than BERT. We follow RoBERTa’s hyperparameters, and follow RoBERTa by training on the MLM task. We monitored loss and stopped training around 20K steps.

In total, training DarkBERT for approximately 15 days on 8 NVIDIA A100 GPUs. The model is available at request so you don’t have to.

Conclusion

Next time, we’ll talk about the experiments and how DarkBERT achieved high performance in a number of dark web tasks.

- Homepage: https://s2w.inc

- Facebook: https://www.facebook.com/S2WLAB

- Twitter: https://twitter.com/S2W_Official

[Part1] Getting to know DarkBERT: A Language Model for the Dark Side of the Internet was originally published in S2W BLOG on Medium, where people are continuing the conversation by highlighting and responding to this story.

Article Link: [Part1] Getting to know DarkBERT: A Language Model for the Dark Side of the Internet | by S2W | S2W BLOG | Medium