In general I try to limit this blog to posts that focus on generally-applicable techniques in cryptography. That is, I don’t focus on the deeply wonky. But this post is going to be an exception. Specifically, I’m going to talk about a topic that most “typical” implementers don’t — and shouldn’t — think about.

That topic is the set of techniques for making public-key encryption schemes chosen-ciphertext secure. I see this as the kind of post that would have saved me ages of reading when I was a grad student, so I figured it wouldn’t hurt to write it all down.

Background: CCA(1/2) security

Early (classical) ciphers used a relatively weak model of security, if they used one at all. That is, the typical security model for an encryption scheme was something like the following:

- I generate an encryption key (or keypair for public-key encryption)

- I give you the encryption of some message of my choice

- You “win” if you can decrypt it

This is obviously not a great model in the real world, for several reasons. First off, in some cases the attacker knows a lot about the message to be decrypted. For example: it may come from a small space (like a set of playing cards). For this reason we require a stronger definition like “semantic security” that assumes the attacker can choose the plaintext distribution, and can also obtain the encryption of messages of his/her own choice. I’ve written more about this here.

More relevant to this post, another limitation of the above game is that, in some real-world examples, the attacker has even more power. That is: in addition to obtaining the encryption of chosen plaintexts, they may be able to convince the secret keyholder to decrypt ciphertexts of their choice.

The latter attack is called a chosen-ciphertext (CCA) attack.

At first blush this seems like a really stupid model. If you can ask the keyholder to decrypt chosen ciphertexts, then isn’t the scheme just obviously broken? Can’t you just decrypt anything you want?

The answer, it turns out, is that there are many real-life examples where the attacker has decryption capability, but the scheme isn’t obviously broken. For example:

- Sometimes an attacker can decrypt a limited set of ciphertexts (for example, because someone leaves the decryption machine unattended at lunchtime). The question then is whether she can learn enough from this access to decrypt other ciphertexts that are generated after she loses access to the decryption machine: for example, messages that are encrypted after the operator comes back from lunch.

- Sometimes an attacker can submit any ciphertext she wants — but will only obtain a partial decryption of the ciphertext. For example, she might learn only a single bit of information such as “did this ciphertext decrypt correctly”. The question, then, is whether she can leverage this tiny amount of data to fully decrypt some ciphertext of her choosing.

The first example is generally called a “non-adaptive” chosen ciphertext attack, or a CCA1 attack (and sometimes, historically, a “lunchtime” attack). There are a few encryption schemes that totally fall apart under this attack — the most famous textbook example is Rabin’s public key encryption scheme, which allows you to recover the full secret key from just a single chosen-ciphertext decryption.
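To make the Rabin example concrete, here is a minimal sketch of the attack against a toy, textbook version of the scheme. Everything in it is an assumption made for illustration: the two small Mersenne primes, the helper names, and an oracle that returns an arbitrary square root with no redundancy check. In this sketch each query reveals a factor with probability about one half, so a single decryption is often enough.

```python
import math
import secrets

# Toy parameters (assumption): two Mersenne primes, both congruent to 3 mod 4.
P = 2**61 - 1
Q = 2**89 - 1
N = P * Q  # the public key; knowing P or Q is knowing the secret key


def sqrt_mod_prime(c, p):
    # Valid for primes p = 3 (mod 4) when c is a quadratic residue mod p.
    return pow(c, (p + 1) // 4, p)


def decryption_oracle(c):
    """Textbook Rabin decryption: return one square root of c modulo N."""
    rp = sqrt_mod_prime(c % P, P)
    rq = sqrt_mod_prime(c % Q, Q)
    # Combine the two roots with the Chinese Remainder Theorem.
    return (rp + P * ((rq - rp) * pow(P, -1, Q) % Q)) % N


def recover_factor(oracle, n):
    """Submit r^2 for decryption; a root other than +/-r exposes a factor."""
    queries = 0
    while True:
        r = secrets.randbelow(n - 2) + 2
        s = oracle(pow(r, 2, n))
        queries += 1
        if s not in (r, n - r):
            # r^2 = s^2 (mod n) with s != +/-r, so gcd(r - s, n) is P or Q.
            return math.gcd(abs(r - s), n), queries


factor, queries_used = recover_factor(decryption_oracle, N)
```

The attacker never sees `P` or `Q` directly; the factor falls out of the oracle handing back a square root that differs from the one the attacker started with.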

The more powerful second example is generally referred to as an “adaptive” chosen ciphertext attack, or a CCA2 attack. The term refers to the idea that the attacker can select the ciphertexts they try to decrypt based on seeing a specific ciphertext that they want to attack, and by seeing the answers to specific decryption queries.

In this article we’re going to use the more powerful “adaptive” (CCA2) definition, because that subsumes the CCA1 definition. We’re also going to focus primarily on public-key encryption.

With this in mind, here is the intuitive definition of the experiment we want a CCA2 public-key encryption scheme to be able to survive:

1. I generate an encryption keypair for a public-key scheme and give you the public key.

2. You can send me (sequentially and adaptively) many ciphertexts, which I will decrypt with my secret key. I’ll give you the result of each decryption.

3. Eventually you’ll send me a pair of messages (of equal length) $M_0, M_1$, and I’ll pick a bit $b$ at random and return to you the encryption of $M_b$, which I will denote as $C^*$.

4. You’ll repeat step (2), sending me ciphertexts to decrypt. If you send me $C^*$ I’ll reject your attempt. But I’ll decrypt any other ciphertext you send me, even if it’s only slightly different from $C^*$.

5. The attacker outputs their guess $b'$. They “win” the game if $b' = b$.

We say that our scheme is secure if the attacker cannot win with probability significantly greater than they would achieve by simply guessing at random. Since they can win this game with probability 1/2 just by guessing randomly, that means we want (Probability attacker wins the game) – 1/2 to be “very small” (typically a negligible function of the security parameter).
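Written in symbols, the condition looks like this. The notation is an assumption made for illustration: $\lambda$ is the security parameter, $\mathcal{A}$ is the attacker, $b$ is the hidden bit, $b'$ is the attacker’s guess, and $\mathsf{negl}$ stands for some negligible function.

$$\mathsf{Adv}_{\mathcal{A}}(\lambda) \;=\; \Pr[\,b' = b\,] - \frac{1}{2} \;\le\; \mathsf{negl}(\lambda)$$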

You should notice two things about this definition. First, it gives the attacker the full decryption of any ciphertext they send me. This is obviously much more powerful than just giving the attacker a single bit of information, as we mentioned in the example further above. But note that powerful is good. If our scheme can remain secure in this powerful experiment, then clearly it will be secure in a setting where the attacker gets strictly less information from each decryption query.

The second thing you should notice is that we impose a single extra condition in step (4), namely that the attacker cannot ask us to decrypt the challenge ciphertext $C^*$. We do this only to prevent the game from being “trivial”: if we did not impose this requirement, the attacker could always just hand us back $C^*$ to decrypt, and they would always learn the value of the bit $b$.

(Notice as well that we do not give the attacker the ability to request encryptions of chosen plaintexts. We don’t need to do that in the public-key version of this game: since the attacker has the public key, she can encrypt anything she wants without my help.)
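If it helps to see the experiment as code, here is a rough sketch of the five steps as a small harness. The scheme plugged into it is toy textbook ElGamal over a Mersenne prime, which I have swapped in purely because it is short and well known to be malleable; the parameters, function names, and the `MaulingAdversary` class are all hypothetical and not taken from any real library.

```python
import secrets

# Toy textbook ElGamal (assumption, illustrative only; not a secure choice).
P = 2**127 - 1
G = 3


def keygen():
    sk = secrets.randbelow(P - 2) + 1
    return pow(G, sk, P), sk


def encrypt(pk, m):
    r = secrets.randbelow(P - 2) + 1
    return (pow(G, r, P), m * pow(pk, r, P) % P)


def decrypt(sk, c):
    c1, c2 = c
    return c2 * pow(pow(c1, sk, P), -1, P) % P


def cca2_game(adversary):
    """Run the experiment once; return True if the adversary guesses b."""
    pk, sk = keygen()                                    # step 1

    def oracle(c):                                       # step 2
        return decrypt(sk, c)

    m0, m1 = adversary.choose_messages(pk, oracle)       # step 3
    b = secrets.randbelow(2)
    challenge = encrypt(pk, (m0, m1)[b])

    def restricted_oracle(c):                            # step 4
        if c == challenge:
            raise ValueError("decrypting the challenge is not allowed")
        return decrypt(sk, c)

    guess = adversary.guess(pk, challenge, restricted_oracle)  # step 5
    return guess == b


class MaulingAdversary:
    """Doubles the second ciphertext component, then asks for a decryption."""

    def choose_messages(self, pk, oracle):
        self.m0, self.m1 = 2, 3
        return self.m0, self.m1

    def guess(self, pk, challenge, oracle):
        c1, c2 = challenge
        doubled = oracle((c1, 2 * c2 % P))  # decrypts to 2 * M_b mod P
        return 0 if doubled == 2 * self.m0 % P else 1


wins = sum(cca2_game(MaulingAdversary()) for _ in range(50))
```

Against this toy scheme the adversary wins every round, which is exactly what the definition is designed to rule out: the only forbidden query is the challenge itself, and a slightly different ciphertext is fair game.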

With definitions out of the way, let’s talk a bit about how we achieve CCA2 security in real schemes.

A quick detour: symmetric encryption

This post is mainly going to focus on public-key encryption, because that’s actually the problem that’s challenging and interesting to solve. It turns out that achieving CCA2 for symmetric-key encryption is really easy. Let me briefly explain why this is, and why the same ideas don’t work for public-key encryption.

(To explain this, we’ll need to slightly tweak the CCA2 definition above to make it work in the symmetric setting. The changes here are small: we won’t give the attacker a public key in step (1), and at steps (2) and (4) we will allow the attacker to request the encryption of chosen plaintexts as well as the decryption.)

The first observation is that many common encryption schemes — particularly, the widely-used cipher modes of operation like CBC and CTR — are semantically secure in a model where the attacker does not have the ability to decrypt chosen ciphertexts. However, these same schemes break completely in the CCA2 model.

The simple reason for this is ciphertext malleability. Take CTR mode, which is particularly easy to mess with. Say that at step (4) we hold the challenge ciphertext $C^*$ (recall that $C^*$ is the encryption of $M_b$). It’s trivially easy to “maul” this ciphertext, simply by flipping, say, a bit of the message (i.e., XORing it with “1”). This gives us a new ciphertext $C' = C^* \oplus 1$, which the rules of the game allow us to submit for decryption. In return we obtain $M_b \oplus 1$, which we can use to figure out $b$.
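Here is a small sketch of that bit-flipping attack. To keep it runnable with only the standard library, the keystream comes from SHA-256 over a key, nonce and counter, which is a stand-in for a real block cipher in CTR mode and an assumption of this example; the function names and messages are likewise made up.

```python
import hashlib
import secrets


def keystream(key, nonce, length):
    # Stand-in for AES-CTR (assumption): hash key || nonce || counter.
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return out[:length]


def xor(a, b):
    return bytes(x ^ y for x, y in zip(a, b))


def ctr_encrypt(key, msg):
    nonce = secrets.token_bytes(16)
    return nonce + xor(msg, keystream(key, nonce, len(msg)))


def ctr_decrypt(key, ct):
    nonce, body = ct[:16], ct[16:]
    return xor(body, keystream(key, nonce, len(body)))


def play_round():
    """One round of the game; returns True if the mauling attacker wins."""
    key = secrets.token_bytes(32)
    m0, m1 = b"attack at dawn!!", b"retreat at noon!"   # equal length
    b = secrets.randbelow(2)
    challenge = ctr_encrypt(key, (m0, m1)[b])

    # Flip the lowest bit of the last byte: a different, still valid ciphertext.
    mauled = challenge[:-1] + bytes([challenge[-1] ^ 1])
    assert mauled != challenge

    # The decryption oracle answers, because mauled is not the challenge.
    plaintext = ctr_decrypt(key, mauled)

    # Undo the flip to recover M_b, then read off b.
    recovered = plaintext[:-1] + bytes([plaintext[-1] ^ 1])
    guess = 0 if recovered == m0 else 1
    return guess == b


wins = sum(play_round() for _ in range(50))
```

The attacker wins every round without ever touching the key: a flipped bit in the ciphertext becomes the same flipped bit in the plaintext.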

(A related, but “real world” variant of this attack is Vaudenay’s Padding Oracle Attack, which breaks actual implementations of symmetric-key cryptosystems. Here’s one we did against Apple iMessage. Here’s an older one on XML encryption.)

So how do we fix this problem? The straightforward observation is that we need to prevent the attacker from mauling the ciphertext $C^*$. The generic approach to doing this is to modify the encryption scheme so that it includes a Message Authentication Code (MAC) tag computed over every CTR-mode ciphertext. The key for this MAC scheme is generated by the encrypting party (me) and kept with the encryption key. When asked to decrypt a ciphertext, the decryptor first checks whether the MAC is valid. If it’s not, the decryption routine will output “ERROR”. Assuming an appropriate MAC scheme, the attacker can’t modify the ciphertext (including the MAC) without causing the decryption to fail and produce a useless result.

So in short: in the symmetric encryption setting, the answer to CCA2 security is simply for the encrypting parties to authenticate each ciphertext using a secret authentication (MAC) key they generate. Since we’re talking about symmetric encryption, that extra (secret) authentication key can be generated and stored with the decryption key. (Some more efficient schemes make this all work with a single key, but that’s just an engineering convenience.) Everything works out fine.
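A minimal sketch of this encrypt-then-MAC construction follows, using HMAC-SHA256 from the Python standard library for the tag. As an assumption of the example, the CTR keystream is again a SHA-256 stand-in rather than a real block cipher, and the function names are hypothetical. Decryption returns `None` to play the role of “ERROR”.

```python
import hashlib
import hmac
import secrets

TAG_LEN = 32    # HMAC-SHA256 output size
NONCE_LEN = 16


def keystream(key, nonce, length):
    # Stand-in for AES-CTR (assumption): hash key || nonce || counter.
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return out[:length]


def xor(a, b):
    return bytes(x ^ y for x, y in zip(a, b))


def encrypt(enc_key, mac_key, msg):
    nonce = secrets.token_bytes(NONCE_LEN)
    body = nonce + xor(msg, keystream(enc_key, nonce, len(msg)))
    # The tag covers the nonce and the ciphertext, not the plaintext.
    tag = hmac.new(mac_key, body, hashlib.sha256).digest()
    return body + tag


def decrypt(enc_key, mac_key, ct):
    if len(ct) < NONCE_LEN + TAG_LEN:
        return None
    body, tag = ct[:-TAG_LEN], ct[-TAG_LEN:]
    expected = hmac.new(mac_key, body, hashlib.sha256).digest()
    # Check the MAC first, in constant time; refuse to decrypt on mismatch.
    if not hmac.compare_digest(tag, expected):
        return None  # "ERROR"
    nonce, data = body[:NONCE_LEN], body[NONCE_LEN:]
    return xor(data, keystream(enc_key, nonce, len(data)))


enc_key, mac_key = secrets.token_bytes(32), secrets.token_bytes(32)
ct = encrypt(enc_key, mac_key, b"attack at dawn!!")
mauled = ct[:NONCE_LEN] + bytes([ct[NONCE_LEN] ^ 1]) + ct[NONCE_LEN + 1:]
```

With the tag in place, the same bit flip that broke plain CTR mode now yields `None` instead of a related plaintext, so the decryption oracle gives the attacker nothing to work with.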

So now we get to the big question.

CCA security is easy in symmetric encryption. Why can’t we just do the same thing for public-key encryption?

As we saw above, it turns out that strong authenticated encryption is sufficient to get CCA(2) security in the world of symmetric encryption. Sadly, when you try this same idea generically in public key encryption, it doesn’t always work. There’s a short reason for this, and a long one. The short version is: it matters who is doing the encryption.

Let’s focus on the critical difference. In the symmetric CCA2 game above, there is exactly one person who is able to (legitimately) encrypt ciphertexts. That person is me. To put it more clearly: the person who performs the legitimate encryption operations (and has the secret key) is also the same person who is performing decryption.

Even if the encryptor and decryptor aren’t literally the same person, the encryptor still has to be honest. (To see why this has to be the case, remember that the encryptor has the shared secret key! If that party was a bad guy, then the whole scheme would be broken, since they could just output the secret key to the bad guys.) And once you’ve made the stipulation that the encryptor is honest, then you’re almost all the way there. It suffices simply to add some kind of authentication (a MAC or a signature) to any ciphertext she encrypts. At that point the decryptor only needs to determine whether any given ciphertext actually came from the (honest) encryptor, and avoid decrypting the bad ones. You’re done.

Public key encryption (PKE) fundamentally breaks all these assumptions.

In a public-key encryption scheme, the main idea is that anyone can encrypt a message to you, once they get a copy of your public key. The encryption algorithm may sometimes be run by good, honest people. But it can also be run by malicious people. It can be run by parties who are adversarial. The decryptor has to be able to deal with all of those cases. One can’t simply assume that the “real” encryptor is honest.

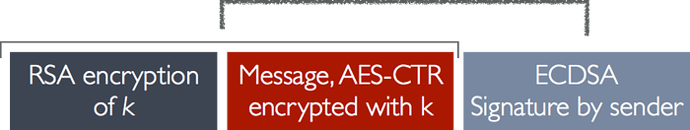

Let me give a concrete example of how this can hurt you. A couple of years ago I wrote a post about flaws in Apple iMessage, which (at the time) used a simple authenticated (public key) encryption scheme. The basic iMessage encryption algorithm used public key encryption (actually a combination of RSA with some AES thrown in for efficiency) so that anyone could encrypt a message to my key. For authenticity, it required that every message be signed with an ECDSA signature by the sender.

When I received a message, I would look up the sender’s public key and first make sure the signature was valid. This would prevent bad guys from tampering with the message in flight — e.g., executing nasty stuff like adaptive chosen ciphertext attacks. If you squint a little, this is almost exactly a direct translation of the symmetric crypto approach we discussed above. We’re simply swapping the MAC for a digital signature.

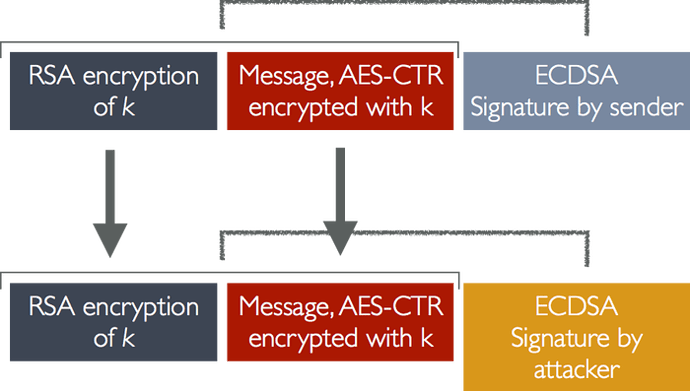

The problems with this scheme start to become apparent when we consider that there might be multiple people sending me ciphertexts. Let’s say the adversary is on the communication path and intercepts a signed message from you to me. They want to change (i.e., maul) the message so that they can execute some kind of clever attack. Well, it turns out this is simple. They simply rip off the honest signature and replace it with one they make themselves.

The new message is identical, but now appears to come from a different person (the attacker). Since the attacker has their own signing key, they can maul the encrypted message as much as they want, and sign new versions of that message. If you plug this attack into (a version of) the public-key CCA2 game up top, you see they’ll win quite easily. All they have to do is take the challenge ciphertext at step (4), change it by tampering with the CTR mode encryption, sign the result with their own signing key, and request the decryption of that ciphertext.
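The strip-and-re-sign step can be sketched in a few lines. This is a toy model, not iMessage: toy hashed RSA signatures over small Mersenne primes stand in for ECDSA, the encrypted payload is an opaque byte string, and the names `alice`, `mallory`, `directory` and `receiver_accepts` are all hypothetical.

```python
import hashlib


def make_signing_key(p, q, e=65537):
    # Toy RSA signing key (assumption): far too small for real use.
    n = p * q
    d = pow(e, -1, (p - 1) * (q - 1))
    return (n, e), (n, d)


def digest(data, n):
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % n


def sign(sk, data):
    n, d = sk
    return pow(digest(data, n), d, n)


def verify(pk, data, sig):
    n, e = pk
    return pow(sig, e, n) == digest(data, n)


alice_pk, alice_sk = make_signing_key(2**61 - 1, 2**89 - 1)
mallory_pk, mallory_sk = make_signing_key(2**107 - 1, 2**127 - 1)

# The receiver's view of the world: anyone may register a signing key.
directory = {"alice": alice_pk, "mallory": mallory_pk}


def receiver_accepts(sender, ciphertext, sig):
    """Look up the claimed sender's key and check the signature."""
    return verify(directory[sender], ciphertext, sig)


# An honest signed message; the payload stands in for a malleable ciphertext.
ciphertext = bytes.fromhex("00112233445566778899aabbccddeeff")
honest = ("alice", ciphertext, sign(alice_sk, ciphertext))


def strip_and_resign(message):
    _, ct, _ = message                       # discard the honest signature
    mauled = ct[:-1] + bytes([ct[-1] ^ 1])   # flip one ciphertext bit
    return ("mallory", mauled, sign(mallory_sk, mauled))


forged = strip_and_resign(honest)
```

The receiver’s check passes for `forged` just as it does for `honest`, because the signature only proves that somebody with a registered key signed the bytes. It says nothing about whether that somebody produced the ciphertext in the first place.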

Of course if I only accept messages signed by some original (guaranteed-to-be-honest) sender, this scheme might work out fine. But that’s not the point of public key encryption. In a real public-key scheme, like the one Apple iMessage was trying to build, I should be able to (safely) decrypt messages from anyone, and in that setting this naive scheme breaks down pretty badly.

Whew.

Ok, this post has gotten a bit long, and so far I haven’t actually gotten to the various “tricks” for adding chosen ciphertext security to real public key encryption schemes. That will have to wait until the next post, to come shortly.

Article Link: https://blog.cryptographyengineering.com/2018/04/21/wonk-post-chosen-ciphertext-security-in-public-key-encryption-part-1/