How to create BigQuery table with external data source(orc) ?

Image Source : Image taken by Avinash Prakash

Image Source : Image taken by Avinash PrakashIntroduction :

Big Query External table take advantage of data stored on various google storage(GCP buckets, Datastore, Firestore) to save storage and transfer cost associated with data transfer from other google storage to Big query internal storage(Colossus).

BigQuery supports querying Cloud Storage data in the following formats:

· Comma-separated values (CSV)

· JSON (newline-delimited)

· Avro

· ORC

· Parquet

· Datastore exports

· Firestore exports

BigQuery supports querying Cloud Storage data from these storage classes:

. Standard

. Nearline

. Coldline

. Archive

External vs Native tables :

1. External tables scan the data from Cloud Storage as your data source- this has the advantage of always querying the latest data. However, while it’s possible to limit the amount of data scanned, external tables don’t take advantage of caching, and queries can be slower.

2. Native tables import the data into BigQuery and allow you to query from there. There are no data transfer charges from Cloud Storage but the normal charges per scanned data apply. With native tables, you can only see the data you imported when you created the table — which is a manual process. Queries will likely be faster than for external tables.

How to create table on externally partitioned ORC data :

Create Table Using BQ Console :

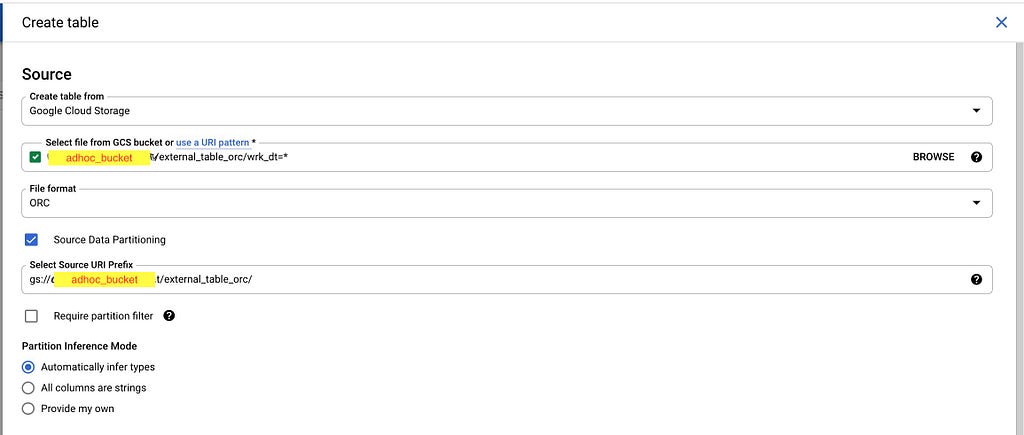

Create table google console part 1

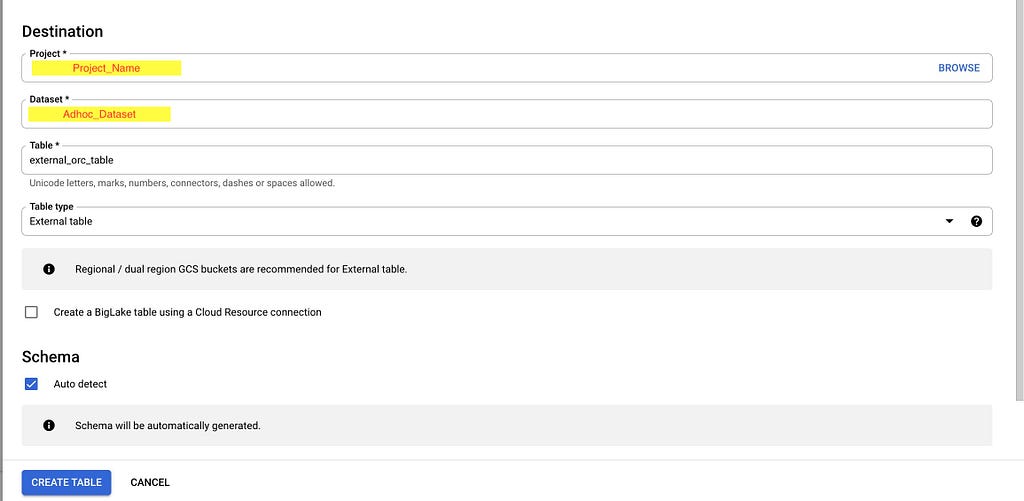

Create table google console part 1 Create table google console part 2

Create table google console part 2Some important points to highlight :

1. Select “Google Cloud Storage” as the option under “Create Table from”. In “Select file from GCS bucket”, just provide the bucket name with date=*(first partition as per your table) and suffix. In our example it will be “gs://adhoc_bucket/external_table_orc/date=*” . Under “file_format”, select as “ORC”

2. Select the checkbox for “Source Data Partitioning”. Provide the “Select Source URI Prefix” as “gs://adhoc_bucket/external_table_orc/” which is location of table in bucket and select “Partition Inference Mode” as “Automatically infer types”.

3. Select the “Table type” as “External table”

4. Select the checkbox for “Auto detect Schema”. This will enable to auto detect the column names and data types. There is option to manually provide the schema in case if you don’t want to infer schema from DataSource. Just unselect the “Auto Detect” and add the Schema details.

5. Finally click on the create table button to create the external table.

External Table Info for AUTO partition table:

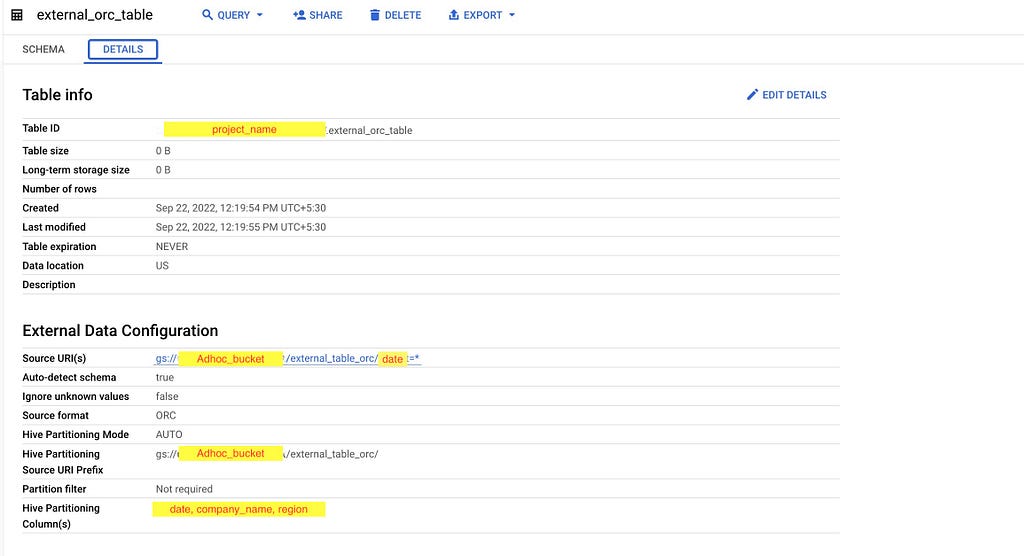

External Table Info

External Table InfoImportant points to highlight :

1. Table Size : 0 B

2. Table is not storing any data in BigQuery storage.

3. Source URI : gs://adhoc_bucket/external_table_orc/date=*

4. Table data is located at above GCS bucket location.

5. Source Format is ORC and Hive partitioning mode is auto

Create Table BQ DDL Using and Custom Partition :

Below Query creates table from GCS bucket with partition column work_date, company_code, region_code and other columns gets automatically inferred from Hive table meta data.

CREATE OR REPLACE EXTERNAL TABLE Project_Name.Adhoc_dateset.external_orc_table_DDL

WITH PARTITION COLUMNS (

date DATE,

company_name STRING,

region STRING

)OPTIONS (uris=[‘gs://adhoc_bucket/external_table_orc/date=*’],

format=orc,

hive_partition_uri_prefix=’gs://adhoc_bucket/external_table_orc/’);

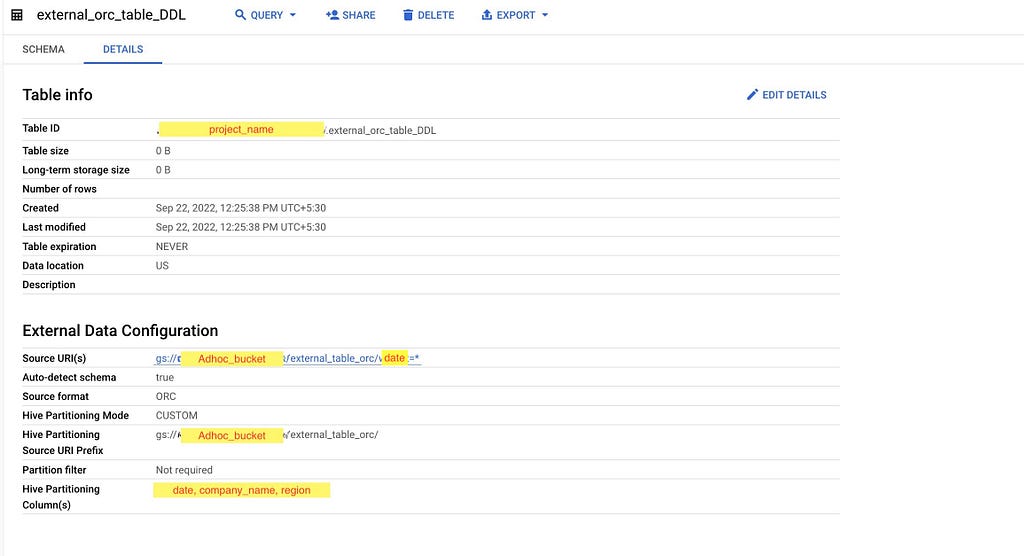

External Table DDL Properties

External Table DDL PropertiesIf you look at the above screenshot closely you will notice that all the details are same except the Hive Partition Mode(custom).

Conclusion :

- BQ external table can be used in case if we want to do quick analysis on data present in google buckets without copying huge amount of data to BQ internal storage.

- BQ external table can be used for rapidly changing reference data present on google bucket or any other BQ external table support data data storage.

- It can also be used in case if we want to extract data from RAW files stored on google bucket and later load it to BQ internal table.

References :

Introduction to external tables | BigQuery | Google Cloud

Image sources : All images are captured during Walmart internal sandbox environment experimentation.

How to create BigQuery table with external data source(orc) ? was originally published in Walmart Global Tech Blog on Medium, where people are continuing the conversation by highlighting and responding to this story.