This article is the third in a series of four articles on the work we’ve been doing for the European Union’s Horizon 2020 project codenamed SHERPA. Each of the articles in this series contains excerpts from a publication entitled “Security Issues, Dangers And Implications Of Smart Systems”. For more information about the project, the publication itself, and this series of articles, check out the intro post here.

This article explains how attacks against machine learning models work, and provides a number of interesting examples of potential attacks against systems that utilize machine learning methodologies.

Introduction

Machine learning models are being used to make automated decisions in more and more places around us. As a result of this, human involvement in decision processes will continue to decline. It is only natural to assume that adversaries will eventually become interested in learning how to fool machine learning models. Indeed, this process is well underway. Search engine optimization attacks, which have been conducted for decades, are a prime example. The algorithms that drive social network recommendation systems have also been under attack for many years. On the cyber security front, adversaries are constantly developing new ways to fool spam filtering and anomaly detection systems. As more systems adopt machine learning techniques, expect to see new, previously un-thought-of attacks surface.

This article details how attacks against machine learning systems work, and how they might be used for malicious purposes.

Types of attacks against AI systems

Depending on the adversary’s access, attacks against machine learning models can be launched in either ‘white box’ or ‘black box’ mode.

White Box Attacks

White-box attack methods assume the adversary has direct access to a model, i.e. the adversary has local access to the code, the model’s architecture, and the trained model’s parameters. In some cases, the adversary may also have access to the data set that was used to train the model. White-box attacks are commonly used in academia to demonstrate attack-crafting methodologies.

Black Box Attacks

Black box attacks assume no direct access to the target model (in many cases, access is limited to performing queries, via a simple interface on the Internet, to a service powered by a machine learning model), and no knowledge of its internals, architecture, or the data used to train the model. Black box attacks work by performing iterative queries against the target model and observing its outputs, in order to build a copy of the target model. White box attack techniques are then performed on that copy.

Techniques that fall between white box and black box attacks also exist. For instance, a standard pre-trained model similar to the target can be downloaded from the Internet, or a model similar to the target can be built and trained by an attacker. Attacks developed against an approximated model often work well against the target model, even if the approximated model is architecturally different to the target model, and even if both models were trained with different data (assuming the complexity of both models is similar).

Attack classes

Attacks against machine learning models can be divided into four main categories based on the motive of the attacker.

Confidentiality attacks expose the data that was used to train the model. Confidentiality attacks can be used to determine whether a particular input was used during the training of the model.

Scenario: obtain confidential medical information about a high-profile individual for blackmail purposes

An adversary obtains publicly available information about a politician (such as name, social security number, address, name of medical provider, facilities visited, etc.), and through an inference attack against a medical online intelligent system, is able to ascertain that the politician has been hiding a long-term medical disorder. The adversary blackmails the politician. This is a confidentiality attack.

Integrity attacks cause a model to behave incorrectly due to tampering with the training data. These attacks include model skewing (subtly retraining an online model to re-categorize input data), and supply chain attacks (tampering with training data while a model is being trained off-line). Adversaries employ integrity attacks when they want certain inputs to be miscategorised by the poisoned model. Integrity attacks can be used, for instance, to avoid spam or malware classification, to bypass network anomaly detection, to discredit the owner of the model or smart information system (SIS), or to cause a model to incorrectly promote a product in an online recommendation system.

Scenario: discredit a company or brand by poisoning a search engine’s auto-complete functionality

An adversary employs a Sybil attack to poison a search engine’s auto-complete function so that it suggests the word “fraud” at the end of an auto-completed sentence with a target company name in it. The targeted company doesn’t notice the attack for some time, but eventually discovers the problem and corrects it. However, the damage is already done, and they suffer long-term negative impact on their brand image. This is an integrity attack (and is possible today).

Availability attacks refer to situations where the availability of a machine learning model to output a correct verdict is compromised. Availability attacks work by subtly modifying an input such that, to a human, the input seems unchanged, but to the model, it looks completely different (and thus the model outputs an incorrect verdict). Availability attacks can be used to ‘disguise’ an input in order to evade proper classification, and can be used to, for instance, defeat parental control software, evade content classification systems, or provide a way of bypassing visual authentication systems (such as facial or fingerprint recognition). In terms of the attacker’s goal, availability attacks are similar to integrity attacks, but the techniques differ: integrity attacks poison the model, whereas availability attacks craft the inputs.

Scenario: trick a self-driving vehicle

An adversary introduces perturbations into an environment, causing self-driving vehicles to misclassify objects around them. This is achieved by, for example, applying stickers or paint to road signs, or projecting images using light or laser pointers. This attack may cause vehicles to ignore road signs, and potentially crash into other vehicles or objects, or cause traffic jams by fooling vehicles into incorrectly determining the colour of traffic lights. This is an availability attack.

Replication attacks allow an adversary to copy or reverse-engineer a model. One common motivation for replication attacks is to create copy (or substitute) models that can then be used to craft attacks against the original system, or to steal intellectual property.

Scenario: steal intellectual property

An adversary employs a replication attack to reverse-engineer a commercial machine-learning based system. Using this stolen intellectual property, they set up a competing company, thus preventing the original company from earning all the revenue they expected to. This is a replication attack.

Availability attacks against classifiers

Classifiers are a type of machine learning model designed to predict the label of an input (for instance, when a classifier receives an image of a dog, it will output a value indicative of having detected a dog in that image). Classifiers are some of the most common machine learning systems in use today, and are used for a variety of purposes, including web content categorization, malware detection, credit risk analysis, sentiment analysis, object recognition (for instance, in self-driving vehicles), and satellite image analysis. The widespread nature of classifiers has given rise to a fair amount of research on the susceptibility of these systems to attack, and possible mitigations against those attacks.

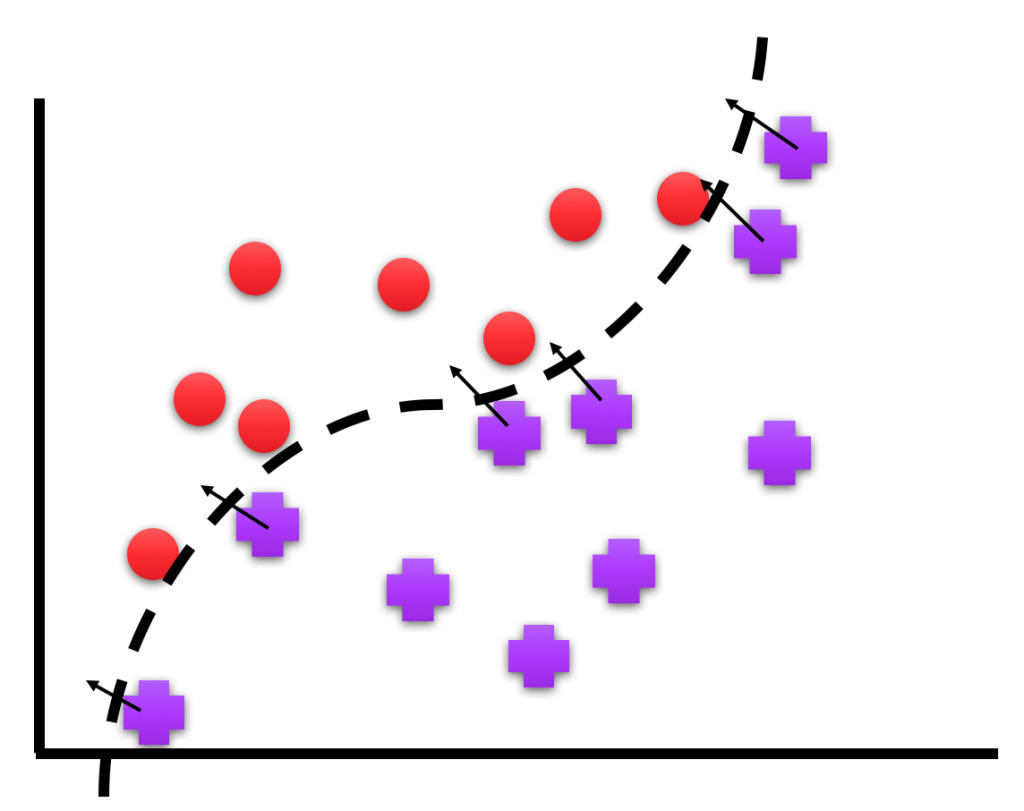

Classifier models often partition data by learning decision boundaries between data points during the training process.

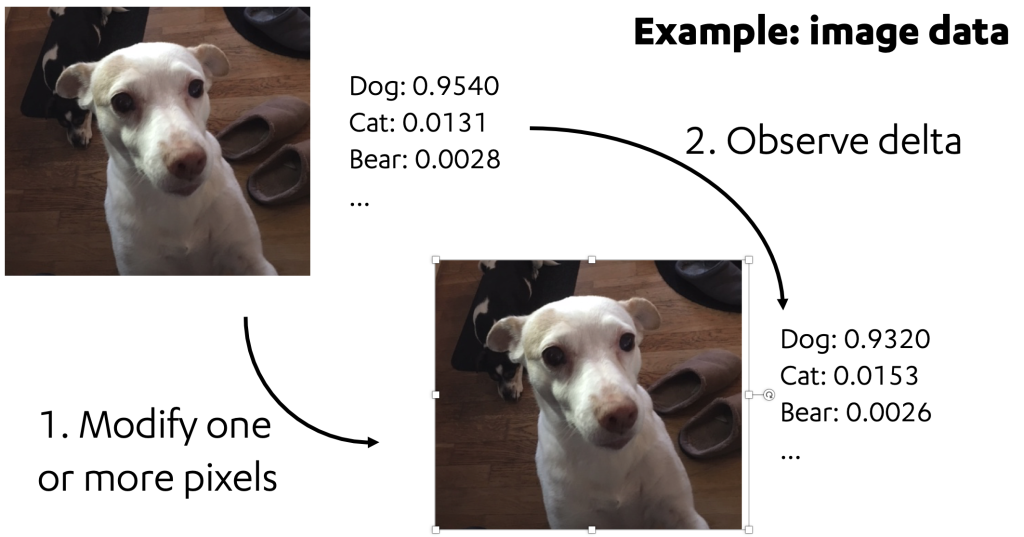

Adversarial samples can be created by examining these decision boundaries and learning how to modify an input sample such that data points in the input cross these decision boundaries. In white box attacks, this is done by iteratively applying small changes to a test input, and observing the output of the target model until a desired output is reached. In the example below, notice how the value for “dog” decreases, while the value for “cat” increases after a perturbation is applied. An adversary wishing to misclassify this image as “cat” will continue to modify the image until the value for “cat” exceeds the value for “dog”.

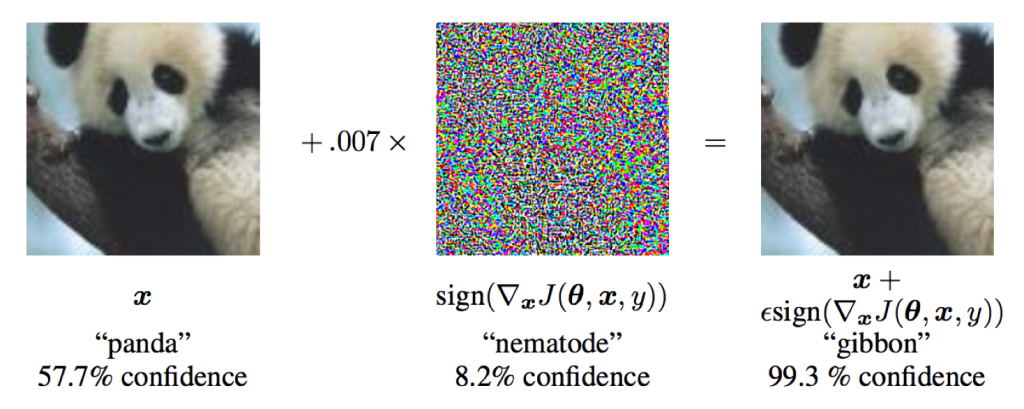
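The iterative process described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the two-class linear model, its weights, the step size, and the function names are all hypothetical, and a real attack would compute gradients through a trained neural network rather than read them directly from a weight table.

```python
import math

# Hypothetical two-class linear model: one made-up weight vector per class.
WEIGHTS = {"dog": [0.9, -0.4, 0.3], "cat": [-0.2, 0.8, 0.1]}

def softmax_scores(x):
    """Return the model's score for each class, normalised to sum to 1."""
    logits = {c: sum(w * v for w, v in zip(ws, x)) for c, ws in WEIGHTS.items()}
    total = sum(math.exp(l) for l in logits.values())
    return {c: math.exp(l) / total for c, l in logits.items()}

def perturb_towards(x, target, other, step=0.05, max_steps=200):
    """Nudge x in small steps until `target` scores higher than `other`."""
    x = list(x)
    # For a linear model, the gradient of (target logit - other logit) with
    # respect to the input is simply the difference between the weight vectors.
    grad = [wt - wo for wt, wo in zip(WEIGHTS[target], WEIGHTS[other])]
    for n in range(max_steps):
        scores = softmax_scores(x)
        if scores[target] > scores[other]:
            return x, n
        # Move each input value a fixed small amount in the helpful direction.
        x = [v + step * (1 if g > 0 else -1 if g < 0 else 0) for v, g in zip(x, grad)]
    return x, max_steps

original = [1.0, 0.2, 0.5]
adversarial, steps = perturb_towards(original, target="cat", other="dog")
```

With these made-up weights, the original input scores higher for “dog”, and the loop needs eight steps of 0.05 before “cat” overtakes it, so no input value moves by more than 0.4.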

The creation of adversarial samples often involves first building a ‘mask’ that can be applied to an existing input, such that it tricks the model into producing the wrong output. In the case of adversarially created image inputs, the images themselves appear unchanged to the human eye. The below illustration depicts this phenomenon. Notice how both images still look like pandas to the human eye, yet the machine learning model classifies the right-hand image as “gibbon”.

Source: Explaining and Harnessing Adversarial Examples, Ian J. Goodfellow, Jonathon Shlens & Christian Szegedy, https://arxiv.org/abs/1412.6572
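One way to build such a mask is the fast gradient sign method proposed in the paper cited above. In that paper’s notation, with model parameters $\theta$, input $x$, true label $y$, and training loss $J$, the adversarial input $\tilde{x}$ is formed as:

$$\tilde{x} = x + \epsilon \cdot \operatorname{sign}\left(\nabla_x J(\theta, x, y)\right)$$

Here $\epsilon$ is a small constant that bounds how far any single input value (for instance, a pixel) can move, which is why the altered image looks unchanged to a human.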

Adversarial samples created in this way can even be used to fool a classifier when the image is printed out, and a photo is taken of the printout. Even simpler methods have been found to create adversarial images. In February 2018, research was published demonstrating that scaled and rotated images can cause misclassification. In the same month, researchers at Kyushu University discovered a number of one-pixel attacks against image classifiers. The ease with which adversarial samples designed to fool image recognition models can be created, and the number of ways of doing so, illustrate that should these models be used to make important decisions (such as in content filtering systems), mitigations (described in the fourth article in this series) should be carefully considered before production deployment.

Scenario: bypass content filtering system

An attacker submits adversarially altered pornographic ad banners to a popular, well-reputed ad provider service. The submitted images bypass their machine learning-based content filtering system. The pornographic ad banner is displayed on frequently visited high-profile websites. As a result, minors are exposed to images that would usually have been blocked by parental control software. This is an availability attack.

Researchers have recently demonstrated that adversarial samples can be crafted for areas other than image classification. In August 2018, a group of researchers at the Horst Görtz Institute for IT Security in Bochum, Germany, crafted psychoacoustic attacks against speech recognition systems, allowing them to hide voice commands in audio of birds chirping. The hidden commands were not perceivable to the human ear, so the audio tracks were perceived differently by humans and machine-learning-based systems.

Scenario: perform a targeted attack against an individual using hidden voice commands

An attacker embeds hidden voice commands into video content, uploads it to a popular video sharing service, and artificially promotes the video (using a Sybil attack). When the video is played on the victim’s system, the hidden voice commands successfully instruct a digital home assistant device to purchase a product without the owner knowing, instruct smart home appliances to alter settings (e.g. turn up the heat, turn off the lights, or unlock the front door), or instruct a nearby computing device to perform searches for incriminating content (such as drugs or child pornography) without the owner’s knowledge (allowing the attacker to subsequently blackmail the victim). This is an availability attack.

Scenario: take widespread control of digital home assistants

An attacker forges a ‘leaked’ phone call depicting plausible scandalous interaction involving high-ranking politicians and business people. The forged audio contains embedded hidden voice commands. The message is broadcast during the evening news on national and international TV channels. The attacker gains the ability to issue voice commands to home assistants or other voice recognition control systems (such as Siri) on a potentially massive scale. This is an availability attack.

Availability attacks against natural language processing systems

Natural language processing (NLP) models are used to parse and understand human language. Common uses of NLP include sentiment analysis, text summarization, question/answer systems, and the suggestions you might be familiar with in web search services. In an anonymous submission to ICLR (the International Conference on Learning Representations) during 2018, a group of researchers demonstrated techniques for crafting adversarial samples to fool natural language processing models. Their work showed how to replace words with synonyms in order to bypass spam filtering, change the outcome of sentiment analysis, and fool a fake news detection model. Similar results were reported by a group of researchers at UCLA in April, 2018.
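The synonym-replacement idea can be illustrated with a toy example. The sketch below is not the method from either paper: the keyword weights, the threshold, and the synonym table are all invented for illustration, and a real attack would target a trained model and draw replacement candidates from word embeddings or a thesaurus.

```python
# Hypothetical keyword weights for a toy spam filter; a real model would learn these.
SPAM_WEIGHTS = {"free": 2.0, "winner": 2.5, "cash": 2.0, "prize": 1.5, "claim": 1.0}
THRESHOLD = 4.0

# Hypothetical synonym table, invented for this example.
SYNONYMS = {"free": "complimentary", "winner": "champion", "cash": "funds",
            "prize": "reward", "claim": "collect"}

def spam_score(words):
    return sum(SPAM_WEIGHTS.get(w, 0.0) for w in words)

def is_spam(words):
    return spam_score(words) >= THRESHOLD

def evade(words):
    """Greedily swap the highest-weighted word for a synonym until the filter passes."""
    words = list(words)
    swaps = 0
    while is_spam(words):
        candidates = [(SPAM_WEIGHTS[w], i) for i, w in enumerate(words) if w in SPAM_WEIGHTS]
        if not candidates:
            break  # nothing left to replace
        _, i = max(candidates)
        words[i] = SYNONYMS[words[i]]
        swaps += 1
    return words, swaps

message = "claim your free cash prize now".split()
evaded, swaps = evade(message)
```

In this toy setting the message reads much the same to a human after two substitutions (“cash” and “free”), yet its score drops from 6.5 to 2.5 and it is no longer flagged.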

Scenario: evade fake news detection systems to alter political discourse

Fake news detection is a relatively difficult problem to solve with automation, and hence, fake news detection solutions are still in their infancy. As these techniques improve and people start to rely on verdicts from trusted fake news detection services, tricking such services infrequently, and at strategic moments, would be an ideal way to inject false narratives into political or social discourse. In such a scenario, an attacker would create a fictional news article based on current events, and adversarially alter it to evade known, respected fake news detection systems. The article would then find its way into social media, where it would likely spread virally before it can be manually fact-checked. This is an availability attack.

Scenario: trick automated trading algorithms that rely on sentiment analysis

Over an extended period of time, an attacker publishes and promotes a series of adversarially created social media messages designed to trick sentiment analysis classifiers used by automated trading algorithms. One or more high-profile trading algorithms trade incorrectly over the course of the attack, leading to losses for the parties involved, and a possible downturn in the market. This is an availability attack.

Availability attacks – reinforcement learning

Reinforcement learning is the process of training an agent to perform actions in an environment. Reinforcement learning models are commonly used by recommendation systems, self-driving vehicles, robotics, and games. Reinforcement learning models receive the current environment’s state (e.g. a screenshot of the game) as an input, and output an action (e.g. move joystick left). In 2017, researchers at the University of Nevada published a paper illustrating how adversarial attacks can be used to trick reinforcement learning models into performing incorrect actions. Similar results were later published by Ian Goodfellow’s team at UC Berkeley.

Two distinct types of attacks can be performed against reinforcement learning models.

A strategically timed attack modifies a single input state, or a small number of input states, at a key moment, causing the agent to malfunction. For instance, in the game of Pong, if a strategic attack is performed as the ball approaches the agent’s paddle, the agent will move its paddle in the wrong direction and miss the ball.

An enchanting attack modifies a number of input states in an attempt to “lure” the agent away from a goal. For instance, an enchanting attack against an agent playing Super Mario could lure the agent into running on the spot, or moving backwards instead of forwards.
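The strategically timed attack can be illustrated with a deliberately tiny Pong-like simulation. Everything here is a hypothetical stand-in: the agent is a fixed rule rather than a trained reinforcement learning model, and the attacker simply mirrors the observed ball position around the paddle instead of computing an adversarial perturbation. The point is only to show that tampering with a few observations at the right moment is enough.

```python
def agent_action(observed_ball_y, paddle_y):
    """A fixed, hypothetical policy: move the paddle one unit towards the ball."""
    if observed_ball_y > paddle_y:
        return 1
    if observed_ball_y < paddle_y:
        return -1
    return 0

def play(attack_window=0, steps=12):
    """Simulate one rally and return (caught, number_of_tampered_observations)."""
    ball_y, ball_dy = 0, 1   # the ball drifts upwards as it approaches the paddle
    paddle_y = 0
    tampered = 0
    for t in range(steps):
        ball_y += ball_dy
        observed = ball_y
        steps_left = steps - 1 - t
        if steps_left < attack_window:
            # Attack only near the end of the rally: mirror the ball around
            # the paddle so the agent moves in the wrong direction.
            observed = 2 * paddle_y - ball_y
            tampered += 1
        paddle_y += agent_action(observed, paddle_y)
    # The paddle catches the ball if it ends within one unit of it.
    return abs(paddle_y - ball_y) <= 1, tampered

print(play(attack_window=0))  # no tampering
print(play(attack_window=3))  # tamper with the last three observations only
```

Without tampering the agent tracks the ball and catches it. Tampering with only the last three of twelve observations makes it miss.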

Scenario: hijack autonomous military drones

By use of an adversarial attack against a reinforcement learning model, autonomous military drones are coerced into attacking a series of unintended targets, causing destruction of property, loss of life, and the escalation of a military conflict. This is an availability attack.

Scenario: hijack an autonomous delivery drone

By use of a strategically timed policy attack, an attacker fools an autonomous delivery drone into altering course and flying into traffic, flying through the window of a building, or landing (such that the attacker can steal its cargo, and perhaps the drone itself). This is an availability attack.

Availability attacks – wrap up

The processes used to craft attacks against classifiers, NLP systems, and reinforcement learning agents are similar. At the time of writing, all attacks crafted in these domains have been purely academic in nature, and we have not read about or heard of any such attacks being used in the real world. However, tooling around these types of attacks is getting better, and easier to use. During the last few years, machine learning robustness toolkits have appeared on GitHub. These toolkits are designed for developers to test their machine learning implementations against a variety of common adversarial attack techniques. The Adversarial Robustness Toolbox, developed by IBM, contains implementations of a wide variety of common evasion attacks and defence methods, and is freely available on GitHub. Cleverhans, a tool developed by Ian Goodfellow and Nicolas Papernot, is a Python library to benchmark machine learning systems’ vulnerability to adversarial examples. It is also freely available on GitHub.

Replication attacks: transferability attacks

Transferability attacks are used to create a copy of a machine learning model (a substitute model), thus allowing an attacker to “steal” the victim’s intellectual property, or craft attacks against the substitute model that work against the original model. Transferability attacks are straightforward to carry out, assuming the attacker has unlimited ability to query a target model.

In order to perform a transferability attack, a set of inputs is crafted and fed into a target model. The model’s outputs are then recorded, and that combination of inputs and outputs is used to train a new model. It is worth noting that this attack will work, within reason, even if the substitute model is not of absolutely identical architecture to the target model.
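The query-record-train loop can be sketched as follows. This is a minimal illustration under stated assumptions: `target_model` is a hypothetical stand-in for a remote service that returns only a label, its hidden weights are invented, and the substitute is a small logistic regression trained with plain gradient descent rather than anything resembling a production model.

```python
import math
import random

def target_model(x):
    """Stand-in for a remote black box; the attacker only sees the returned label."""
    hidden_w, hidden_b = (1.5, -2.0), 0.25   # unknown to the attacker
    return 1 if hidden_w[0] * x[0] + hidden_w[1] * x[1] + hidden_b > 0 else 0

def train_substitute(inputs, outputs, iterations=300, lr=1.0):
    """Fit a logistic regression to the recorded (input, output) pairs."""
    w0 = w1 = b = 0.0
    n = len(inputs)
    for _ in range(iterations):
        g0 = g1 = gb = 0.0
        for (x0, x1), y in zip(inputs, outputs):
            p = 1.0 / (1.0 + math.exp(-(w0 * x0 + w1 * x1 + b)))
            g0 += (p - y) * x0
            g1 += (p - y) * x1
            gb += p - y
        w0 -= lr * g0 / n
        w1 -= lr * g1 / n
        b -= lr * gb / n
    return w0, w1, b

rng = random.Random(0)

# Step 1: craft inputs and record the target's outputs.
queries = [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(500)]
recorded = [target_model(x) for x in queries]

# Step 2: train a substitute model on the recorded pairs.
w0, w1, b = train_substitute(queries, recorded)

def substitute(x):
    return 1 if w0 * x[0] + w1 * x[1] + b > 0 else 0

# Step 3: check how often the copy agrees with the target on unseen inputs.
fresh = [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(1000)]
agreement = sum(substitute(x) == target_model(x) for x in fresh) / len(fresh)
```

Once the substitute agrees with the target on most fresh inputs, white box techniques can be developed against the copy instead of the original.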

It is possible to create a ‘self-learning’ attack to efficiently map the decision boundaries of a target model with relatively few queries. This works by using a machine learning model to craft samples that are fed as input to the target model. The target model’s outputs are then used to guide the training of the sample crafting model. As the process continues, the sample crafting model learns to generate samples that more accurately map the target model’s decision boundaries.

Confidentiality attacks: inference attacks

Inference attacks are designed to determine the data used during the training of a model. Some machine learning models are trained against confidential data such as medical records, purchasing history, or computer usage history. An adversary’s motive for performing an inference attack might be out of curiosity – to simply study the types of samples that were used to train a model – or malicious intent – to gather confidential data, for instance, for blackmail purposes.

A black box inference attack follows a two-stage process. The first stage is similar to the transferability attacks described earlier. The target model is iteratively queried with crafted input data, and all outputs are recorded. This recorded input/output data is then used to train a set of binary classifier ‘shadow’ models – one for each possible output class the target model can produce. For instance, an inference attack against an image classifier that can identify ten different types of images (cat, dog, bird, car, etc.) would create ten shadow models – one for cat, one for dog, one for bird, and so on. All inputs that resulted in the target model outputting “cat” would be used to train the “cat” shadow model, and all inputs that resulted in the target model outputting “dog” would be used to train the “dog” shadow model, etc.

The second stage uses the shadow models trained in the first stage to create the final inference model. Each separate shadow model is fed a set of inputs consisting of a 50-50 mixture of samples that are known to trigger positive and negative outputs. The outputs produced by each shadow model are recorded. For instance, for the “cat” shadow model, half of the samples in this set would be inputs that the original target model classified as “cat”, and the other half would be a selection of inputs that the original target model did not classify as “cat”. All inputs and outputs from this process, across all shadow models, are then used to train a binary classifier that can identify whether a sample it is shown was “in” the original training set or “out” of it. So, for instance, the data recorded while feeding the “cat” shadow model different inputs would consist of inputs known to produce a “cat” verdict with the label “in”, and inputs known not to produce a “cat” verdict with the label “out”. A similar process is repeated for the “dog” shadow model, and so on. All of these inputs and outputs are used to train a single classifier that can determine whether an input was part of the original training set (“in”) or not (“out”).

This black box inference technique works very well against models generated by online machine-learning-as-a-service offerings, such as those available from Google and Amazon. Machine learning experts are in low supply and high demand. Many companies are unable to attract machine learning experts to their organizations, and many are unwilling to fund in-house teams with these skills. Such companies will turn to machine-learning-as-a-service’s simple turnkey solutions for their needs, likely without the knowledge that these systems are vulnerable to such attacks.
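The underlying intuition, that a model tends to respond more confidently to data it was trained on, can be shown with a heavily simplified sketch. Note the departures from the process described above: it uses a single shadow model rather than one per class, the final “in”/“out” classifier is reduced to a confidence threshold, and the `MemorisingModel` class is a hypothetical, deliberately overfitted toy rather than a real machine learning model.

```python
import math
import random

class MemorisingModel:
    """Toy overfitted model: confidence decays with distance to the nearest training point."""
    def __init__(self, training_points):
        self.points = list(training_points)

    def confidence(self, x):
        nearest = min(math.hypot(x[0] - p[0], x[1] - p[1]) for p in self.points)
        return math.exp(-nearest)

def sample(rng, n):
    return [(rng.random(), rng.random()) for _ in range(n)]

rng = random.Random(1)

# Stage 1: the attacker trains a shadow model on data they control,
# so they know exactly which samples are "in" and which are "out".
shadow_in, shadow_out = sample(rng, 50), sample(rng, 50)
shadow = MemorisingModel(shadow_in)

# Stage 2: learn an in/out rule from the shadow model's outputs.
# Here the "attack model" is just a threshold halfway between the two means.
mean_in = sum(shadow.confidence(x) for x in shadow_in) / len(shadow_in)
mean_out = sum(shadow.confidence(x) for x in shadow_out) / len(shadow_out)
threshold = (mean_in + mean_out) / 2

def was_in_training_set(model, x):
    return model.confidence(x) > threshold

# Apply the learned rule to a separate target model and measure how well it works.
target_in, target_out = sample(rng, 50), sample(rng, 50)
target = MemorisingModel(target_in)
correct = sum(was_in_training_set(target, x) for x in target_in)
correct += sum(not was_in_training_set(target, x) for x in target_out)
accuracy = correct / (len(target_in) + len(target_out))
```

Because the toy model memorises its training data, the rule learned from the shadow model transfers directly to the target. Real models leak far less, which is why the full attack trains a classifier on many shadow-model outputs instead of using one threshold.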

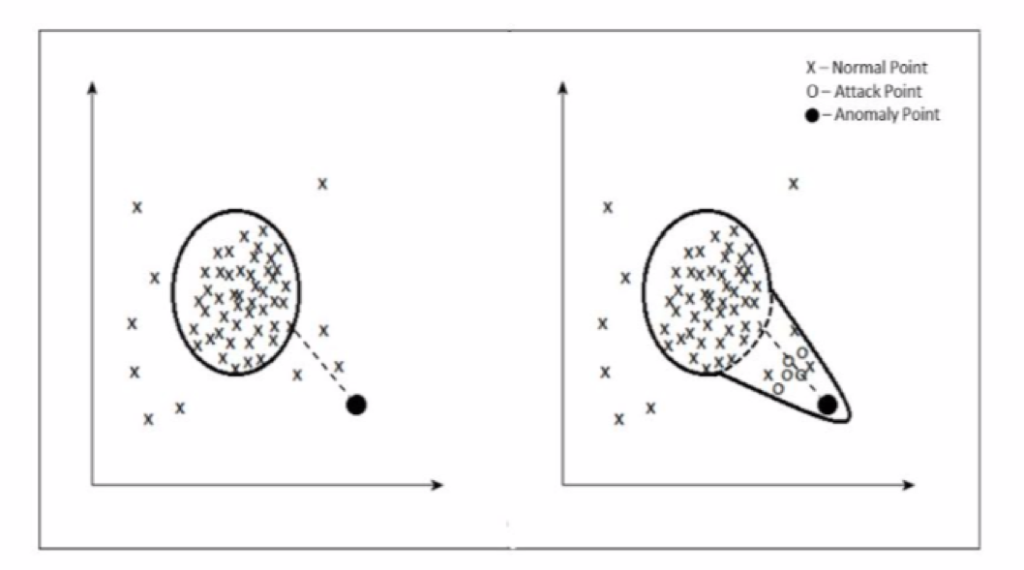

Poisoning attacks against anomaly detection systems

Anomaly detection algorithms are employed in areas such as credit card fraud prevention, network intrusion detection, spam filtering, medical diagnostics, and fault detection. Anomaly detection algorithms flag anomalies when they encounter data points occurring far enough away from the ‘centers of mass’ of clusters of points seen so far. These systems are retrained with newly collected data on a periodic basis. As time goes by, it can become too expensive to train models against all historical data, so a sliding window (based on sample count or date) may be used to select new training data.

Poisoning attacks work by feeding data points into these systems that slowly shift the ‘center of mass’ over time. This process is often referred to as a boiling frog strategy. Poisoned data points introduced by the attacker become part of periodic retraining data, and eventually lead to false positives and false negatives, both of which render the system unusable.
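The boiling frog strategy can be sketched with a toy detector. The assumptions here are mine rather than a description of any real product: the `SlidingWindowDetector` class, the three-standard-deviation rule, the window size, and the attacker’s choice of injecting values at 90% of the current limit are all hypothetical.

```python
from collections import deque
from statistics import mean, pstdev

class SlidingWindowDetector:
    """Flags values more than `k` standard deviations from the window mean."""
    def __init__(self, history, window=100, k=3.0):
        self.window = deque(history, maxlen=window)
        self.k = k

    def is_anomaly(self, value):
        mu, sigma = mean(self.window), pstdev(self.window)
        return abs(value - mu) > self.k * sigma

    def observe(self, value):
        """Score a value; accepted values become part of future retraining data."""
        flagged = self.is_anomaly(value)
        if not flagged:
            self.window.append(value)
        return flagged

def poison_value(detector, fraction=0.9):
    """Pick a value just inside the detector's current upper limit."""
    mu, sigma = mean(detector.window), pstdev(detector.window)
    return mu + fraction * detector.k * sigma

history = [10 + (i % 5) - 2 for i in range(100)]   # normal readings between 8 and 12
detector = SlidingWindowDetector(history)
target_value = 30                                   # what the attacker wants accepted

flagged_before = detector.is_anomaly(target_value)
injected = 0
any_poison_flagged = False
while detector.is_anomaly(target_value) and injected < 500:
    any_poison_flagged |= detector.observe(poison_value(detector))
    injected += 1
flagged_after = detector.is_anomaly(target_value)
```

Each injected value is individually unremarkable, so none is flagged, yet together they drag the window’s mean and spread far enough that a value the detector originally rejected is eventually accepted.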

Attacks against recommenders

Recommender systems are widely deployed by web services (e.g., YouTube, Amazon, and Google News) to recommend relevant items to users, such as products, videos, and news. Some examples of recommender systems include:

- YouTube recommendations that pop up after you watch a video

- Amazon “people who bought this also bought…”

- Twitter “you might also want to follow” recommendations that pop up when you engage with a tweet, perform a search, follow an account, etc.

- Social media curated timelines

- Netflix movie recommendations

- App store purchase recommendations

Recommenders are implemented in various ways:

Recommendation based on user similarity

This technique finds the users most similar to a target user, based on the items they’ve interacted with. It then predicts the target user’s rating scores for other items based on the rating scores of those similar users. For instance, if user A and user B both interacted with item 1, and user B also interacted with item 2, recommend item 2 to user A.
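The example above can be written as a short sketch. It makes simplifying assumptions: interactions are treated as binary (interacted or not) rather than as rating scores, similarity is measured with the Jaccard index, and the function names are invented for illustration.

```python
def jaccard(a, b):
    """Overlap between two sets of items, from 0.0 (disjoint) to 1.0 (identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def recommend_by_user_similarity(interactions, target, k=1):
    """Recommend unseen items from the k users most similar to `target`."""
    seen = interactions[target]
    neighbours = sorted(
        (u for u in interactions if u != target),
        key=lambda u: jaccard(seen, interactions[u]),
        reverse=True,
    )[:k]
    scores = {}
    for u in neighbours:
        similarity = jaccard(seen, interactions[u])
        for item in interactions[u] - seen:
            scores[item] = scores.get(item, 0.0) + similarity
    # Highest score first, ties broken alphabetically.
    return sorted(scores, key=lambda item: (-scores[item], item))

interactions = {
    "A": {"item1"},
    "B": {"item1", "item2"},
    "C": {"item3"},
}
print(recommend_by_user_similarity(interactions, "A"))  # ['item2']
```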

Recommendation based on item similarity

This technique finds common interactions between items and then recommends items to a target user based on those interactions. For instance, if many users have interacted with both items A and B, then if a target user interacts with item A, recommend item B.

Recommendation based on both user and item similarity

These techniques use a combination of both user and item similarity-matching logic. This can be done in a variety of ways. For instance, rankings for items a target user has not interacted with yet are predicted via a ranking matrix generated from interactions between users and items that the target already interacted with.

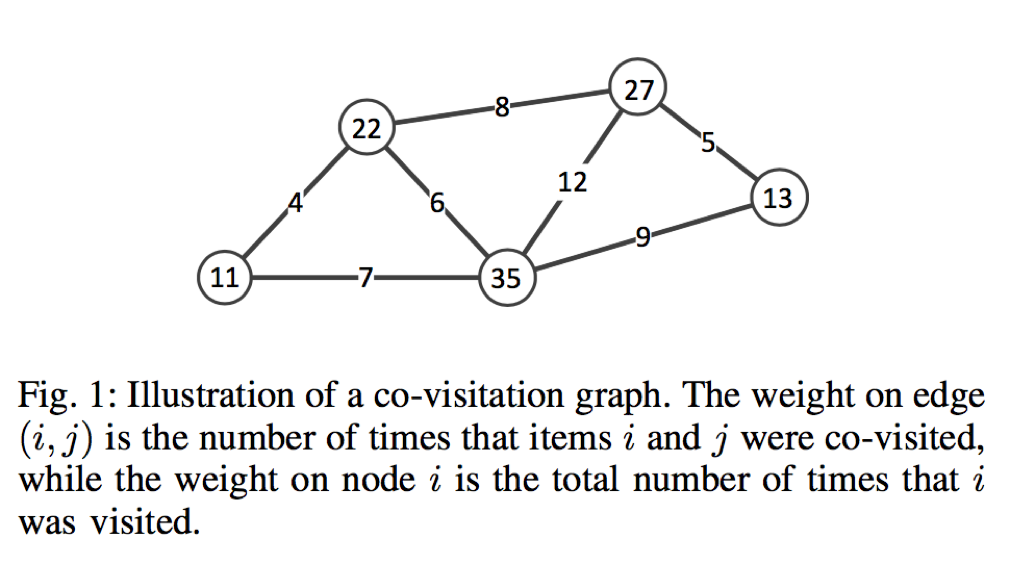

An underlying mechanism in many recommendation systems is the co-visitation graph. It consists of a set of nodes and edges, where nodes represent items (products, videos, users, posts) and edge weights represent the number of times a pair of items was visited by the same user.
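A co-visitation graph of this kind can be sketched with a counter keyed by item pairs. The session data and function names below are hypothetical, and real systems typically add time windows, normalisation, and popularity thresholds on top of raw counts.

```python
from collections import Counter
from itertools import combinations

def build_covisitation_graph(sessions):
    """Edge weight = number of users who visited both items of a pair."""
    edges = Counter()
    for items in sessions.values():
        for a, b in combinations(sorted(set(items)), 2):
            edges[(a, b)] += 1
    return edges

def related_items(edges, item, top_n=3):
    """Items most often co-visited with `item`, strongest edge first."""
    scores = Counter()
    for (a, b), weight in edges.items():
        if a == item:
            scores[b] += weight
        elif b == item:
            scores[a] += weight
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    return [name for name, _ in ranked[:top_n]]

sessions = {
    "u1": ["A", "B"],
    "u2": ["A", "B", "C"],
    "u3": ["A", "C"],
    "u4": ["A", "B"],
}
edges = build_covisitation_graph(sessions)
print(edges[("A", "B")])          # 3
print(related_items(edges, "A"))  # ['B', 'C']
```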

The most widely used attacks against recommender systems are Sybil attacks (which are integrity attacks, see above). The attack process is simple – an adversary creates several fake users or accounts, and has them engage with items in patterns designed to change how that item is recommended to other users. Here, the term ‘engage’ is dependent on the system being attacked, and could include rating an item, reviewing a product, browsing a number of items, following a user, or liking a post. Attackers may probe the system using ‘throw-away’ accounts in order to determine underlying mechanisms, and to test detection capabilities. Once an understanding of the system’s underlying mechanisms has been acquired, the attacker can leverage that knowledge to perform efficient attacks on the system (for instance, based on knowledge of whether the system is using co-visitation graphs). Skilled attackers carefully automate their fake users to behave like normal users in order to avoid Sybil attack detection techniques.

Motives include:

- promotion attacks – trick a recommender system into promoting a product, piece of content, or user to as many people as possible

- demotion attacks – cause a product, piece of content, or user to not be promoted as much as it should

- social engineering – in theory, if an adversary already has knowledge of how a specific user has interacted with items in the system, an attack can be crafted to target that user with a recommendation such as a YouTube video, malicious app, or imposter account to follow.
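A promotion attack against a system built on co-visitation counts can be sketched as follows. The item names, the session counts, and the helper functions are hypothetical, and the sketch ignores the Sybil detection that a real service would apply.

```python
from collections import Counter
from itertools import combinations

def covisitation(sessions):
    """Count how many sessions contain each pair of items."""
    edges = Counter()
    for items in sessions:
        for pair in combinations(sorted(set(items)), 2):
            edges[pair] += 1
    return edges

def top_related(edges, item):
    """Return the item most often co-visited with `item`."""
    scores = Counter()
    for (a, b), weight in edges.items():
        if item in (a, b):
            scores[b if a == item else a] += weight
    return max(sorted(scores), key=lambda name: scores[name])

# Genuine users mostly view "rival" alongside "popular".
genuine = [["popular", "rival"]] * 5 + [["popular", "target"]]

# Fake accounts all visit "popular" together with the item being promoted.
sybils = [["popular", "target"]] * 6

before = top_related(covisitation(genuine), "popular")
after = top_related(covisitation(genuine + sybils), "popular")
print(before, "->", after)  # rival -> target
```

Six fake sessions are enough here to replace the genuine top recommendation. In practice the required number depends on how much real traffic the targeted item pair receives.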

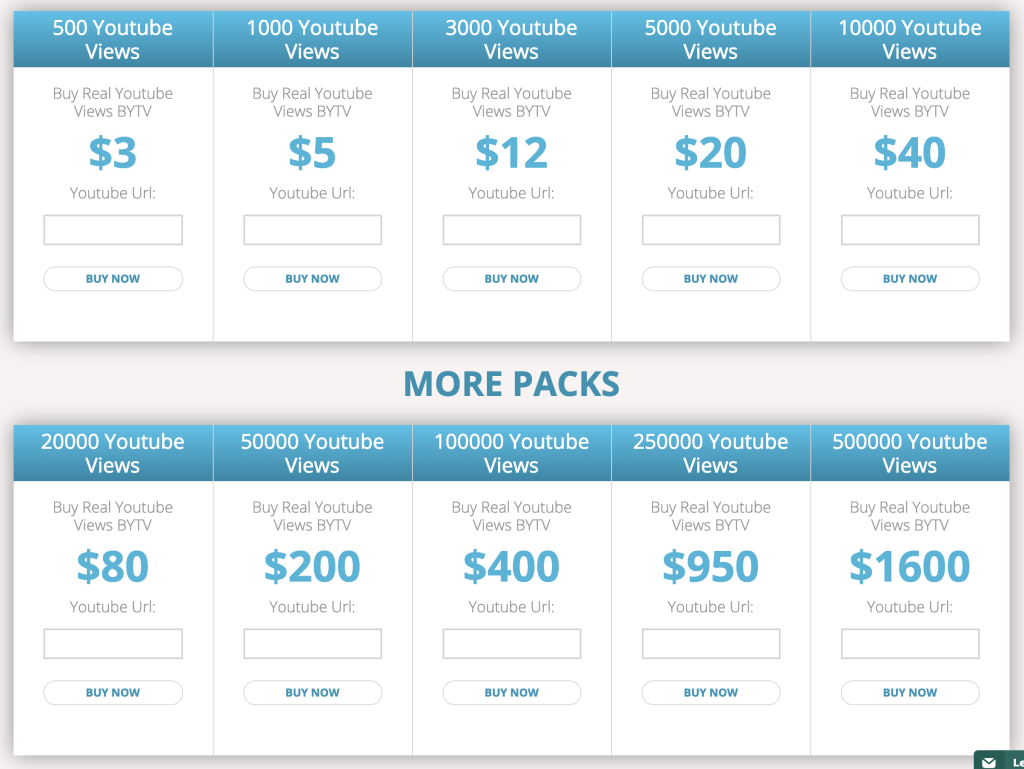

Numerous attacks are already being performed against recommenders, search engines, and other similar online services. In fact, an entire industry exists to support these attacks. With a simple web search, it is possible to find inexpensive purchasable services that poison app store ratings, restaurant rating systems, and comments sections on websites and YouTube; inflate online polls and the engagement (and thus visibility) of content or accounts; and manipulate autocomplete and search results.

The prevalence and low cost of such services indicate that they are probably widely used. Maintainers of social networks, e-commerce sites, crowd-sourced review sites, and search engines must be able to deal with the existence of these malicious services on a daily basis. Detecting attacks on this scale is non-trivial and takes more than rules, filters, and algorithms. Even though plenty of manual human labour goes into detecting and stopping these attacks, many of them go unnoticed.

From celebrities inflating their social media profiles by purchasing followers, to Cambridge Analytica’s reported involvement in meddling with several international elections, to a non-existent restaurant becoming London’s number one rated eatery on TripAdvisor, to coordinated review brigading ensuring that conspiratorial literature about vaccinations and cancer was highly recommended on Amazon, to a plethora of psy-ops attacks launched by the alt-right, high profile examples of attacks on social networks are becoming more prevalent, interesting, and perhaps disturbing. These attacks are often discovered long after the fact, when the damage is already done. Identifying even simple attacks while they are ongoing is extremely difficult, and there is no doubt many attacks are ongoing at this very moment.

Attacks against federated learning systems

Federated learning is a machine learning setting where the goal is to train a high-quality centralized model based on models locally trained in a potentially large number of clients, thus avoiding the need to transmit client data to the central location. A common application of federated learning is text prediction in mobile phones. Each phone contains a local machine learning model that learns from its user (for instance, which recommended word they clicked on). The phone transmits its learning (the weights of the phone’s model) to an aggregator system, and periodically receives a new model trained on the learning from all of the other participating phones.

Attacks against federated learning can be viewed as poisoning or supply chain (integrity) attacks. A number of Sybils, designed to poison the main model, are inserted into a federated learning network. These Sybils collude to transmit incorrectly trained model weights back to the aggregator which, in turn, pushes poisoned models back to the rest of the participants. For a federated text prediction system, a number of Sybils could be used to perform an attack that causes all participants’ phones to suggest incorrect words in certain situations. The ultimate solution to preventing attacks in federated learning environments is to find a concrete method of establishing and maintaining trust amongst the participants of the network, which is clearly very challenging.
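The effect of colluding Sybils on an aggregator can be shown with a small sketch. It assumes the aggregator takes a plain unweighted average of the submitted weights, which is a simplification, and the weight values are invented for illustration.

```python
def federated_average(client_weights):
    """Aggregate client models by averaging each weight position (unweighted)."""
    n = len(client_weights)
    return [sum(position) / n for position in zip(*client_weights)]

# Hypothetical weight vectors reported by honest participants.
honest = [[0.9, 0.1], [1.0, 0.0], [1.1, -0.1], [1.0, 0.0]]

# Two colluding Sybils report the same deliberately wrong weights.
sybils = [[-5.0, 5.0]] * 2

clean_model = federated_average(honest)
poisoned_model = federated_average(honest + sybils)
print(clean_model)
print(poisoned_model)
```

With only the honest participants the averaged model is approximately [1.0, 0.0]. Adding two Sybils to four honest clients flips the sign of the first weight, and that poisoned model is what the aggregator would push back to every participant.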

Conclusion

The understanding of flaws and vulnerabilities inherent in the design and implementation of systems built on machine learning, and the means to validate those systems and to mitigate attacks against them, are still in their infancy. In comparison with traditional systems, this work is complicated by the lack of explainability to the user, heavy dependence on training data, and oftentimes frequent model updating. This field is attracting the attention of researchers, and is likely to grow in the coming years. As understanding in this area improves, so too will the availability and ease-of-use of tools and services designed for attacking these systems.

As artificial-intelligence-powered systems become more prevalent, it is natural to assume that adversaries will learn how to attack them. Indeed, some machine-learning-based systems in the real world have been under attack for years already. As we witness today in conventional cyber security, complex attack methodologies and tools initially developed by highly resourced threat actors, such as nation states, eventually fall into the hands of criminal organizations and then common cyber criminals. This same trend can be expected for attacks developed against machine learning models.

This concludes the third article in this series. The fourth and final article explores currently proposed methods for hardening machine learning systems against adversarial attacks.

Article Link: https://labsblog.f-secure.com/2019/07/11/adversarial-attacks-against-ai/